How the Automated SEO Audit Works — From URL to Branded PDF

A step-by-step look at how the automated SEO audit crawls your site, scores 40 checks across 12 categories, maps your site architecture, and delivers a prioritised strategy — all in under 24 hours.

What Happens When You Request an Audit

You submit your URL. Within 24 hours, you receive a branded PDF covering 40 checks across 12 categories, spanning technical SEO, on-page quality, site architecture, and AI readiness, with a prioritised strategy telling you exactly what to fix and in what order.

This is the mid-range audit. It replaces a traditional 7-10 hour manual SEO audit. The difference is that every step is automated, every finding is scored by business impact, and the output is consistent regardless of site size.

The audit works with WordPress, Shopify, and most other website builds of up to 1,000 pages. It does not currently support Magento sites.

Here is exactly what happens between submitting your URL and receiving your report.

Stage 1: Discovery — Finding Every Page on Your Site

The system starts by reading your robots.txt file and parsing your XML sitemap. This gives it a seed list of every URL your site declares publicly.

But sitemaps are often incomplete. So the system also follows every internal link it finds during the crawl, adding undiscovered pages to the queue. This catches pages your sitemap missed — and on most sites, that is a significant number.

What this means for you: Every page that is declared in your sitemap or reachable via an internal link is found and checked. One real audit discovered 895 pages on a site whose sitemap only declared 366.
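The discovery step described above can be sketched roughly as follows. This is a minimal illustration rather than the audit's actual code: `sitemap_urls`, `discover`, and the `get_links` callable are hypothetical names, and a real crawler would fetch and render each page instead of looking links up in memory.

```python
import xml.etree.ElementTree as ET
from collections import deque

# Namespace used by the standard sitemap protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Return every <loc> URL declared in a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text}


def discover(seed_urls, get_links) -> set[str]:
    """Breadth-first discovery: start from the sitemap, then follow links.

    get_links is a hypothetical callable that returns the internal links
    found on a page (assumption: fetching and rendering happen elsewhere).
    """
    seen = set(seed_urls)
    queue = deque(sorted(seen))
    while queue:
        url = queue.popleft()
        for link in get_links(url):
            if link not in seen:  # a page the sitemap did not declare
                seen.add(link)
                queue.append(link)
    return seen
```

The difference between `discover(...)` and the sitemap seed set is the list of pages the sitemap missed.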

Stage 2: Browser-Based Crawl — Seeing Your Site Like Google Does

Every page is loaded in a real Chromium browser with full JavaScript execution. This is not a simple HTTP request — it renders your site the same way Google and your visitors see it.

For each page, the system captures:

- Title tags and meta descriptions — presence, length, uniqueness

- Heading structure — H1 through H6 hierarchy

- Images — every image source and whether it has alt text

- Internal and external links — where each page links to and from

- Schema markup — JSON-LD structured data and validation

- Content — word count, keyword density, content depth

- Security headers — HTTPS, HSTS, redirect chains

What this means for you: You get the same depth of technical data as a senior SEO using Screaming Frog — but captured automatically across your entire site in minutes rather than hours.
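To make the per-page capture concrete, here is a small sketch of extracting a few of these signals. It assumes the page has already been rendered in a browser and its final HTML is available as a string; the rendering step itself is not shown. The `PageFacts` class and `extract` function are illustrative names, not part of the audit tool.

```python
from html.parser import HTMLParser


class PageFacts(HTMLParser):
    """Collect a few on-page signals from already-rendered HTML."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.headings = []            # heading tags in document order
        self.images_missing_alt = []  # src of each <img> without alt text
        self.links = []               # every href found on an <a> tag
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content")
        elif tag in {"h1", "h2", "h3", "h4", "h5", "h6"}:
            self.headings.append(tag)
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt.append(attrs.get("src"))
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract(html: str) -> PageFacts:
    """Parse one page and return the collected facts."""
    parser = PageFacts()
    parser.feed(html)
    parser.close()
    return parser
```

Checks such as title length, H1 uniqueness, or alt text coverage can then be computed from these fields across all pages.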

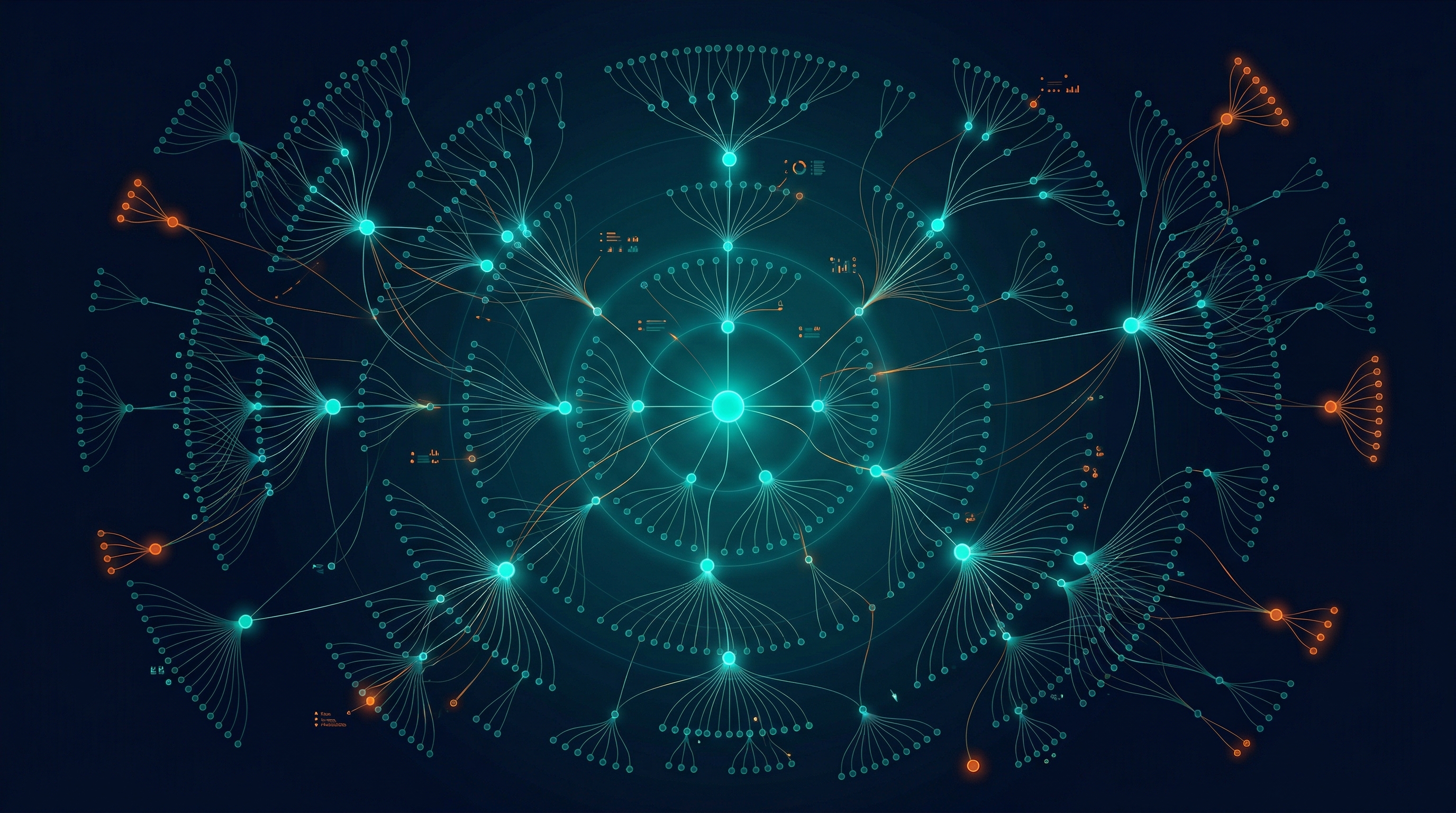

Stage 3: Site Architecture Graph — Mapping How Your Pages Connect

As the crawl runs, the system builds a directed link graph — a map of every internal link between every page on your site. This is where the audit goes beyond a flat crawl.

The graph reveals:

- Orphan pages — pages with zero inbound internal links that Google struggles to find

- Crawl depth — how many clicks it takes to reach each page from your homepage

- Dead-end pages — pages that link to nothing, creating authority sinks

- Hub pages — the navigation backbone that distributes link equity across your site

- Internal PageRank — how authority flows through your site structure
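The metrics in this list can all be derived from one adjacency map. The sketch below is an assumption about how such an analysis might look, not the audit's implementation: `analyse_graph` is a hypothetical name, the damping factor of 0.85 is the conventional PageRank default, and self-links and duplicate links are ignored for simplicity.

```python
from collections import deque


def analyse_graph(links, home, damping=0.85, iterations=50):
    """links maps each page to the internal pages it links to."""
    pages = set(links) | {t for targets in links.values() for t in targets} | {home}
    # Deduplicate outbound links and drop self-links.
    out = {p: [t for t in dict.fromkeys(links.get(p, [])) if t != p] for p in pages}

    inbound = {p: 0 for p in pages}
    for targets in out.values():
        for t in targets:
            inbound[t] += 1

    orphans = sorted(p for p in pages if inbound[p] == 0 and p != home)
    dead_ends = sorted(p for p in pages if not out[p])

    # Crawl depth: clicks from the homepage, via breadth-first search.
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for t in out[page]:
            if t not in depth:
                depth[t] = depth[page] + 1
                queue.append(t)

    # Internal PageRank by power iteration; dead ends spread rank evenly.
    n = len(pages)
    rank = {p: 1 / n for p in pages}
    for _ in range(iterations):
        dangling = sum(rank[p] for p in pages if not out[p])
        new = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for src, targets in out.items():
            for t in targets:
                new[t] += damping * rank[src] / len(targets)
        rank = new

    return {"orphans": orphans, "dead_ends": dead_ends, "depth": depth, "pagerank": rank}
```

Pages that appear in `orphans` are typically known only from the sitemap, which is why they never show up in `depth`.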

What This Looks Like in Practice

Here is a real site architecture analysis from a recent audit (anonymised):

| Metric | Value |
|---|---|
| Total pages discovered | 450 |
| Internal links mapped | 9,912 |
| Orphan pages | 0 |
| Dead-end pages | 0 |
| Max crawl depth | 4 |
| Avg crawl depth | 2.6 |

This site had excellent architecture — every page was reachable within 4 clicks and no pages were orphaned.

Compare that to another real audit:

| Metric | Value |
|---|---|
| Total pages discovered | 110 |
| Internal links mapped | 1,738 |
| Orphan pages | 46 |
| Dead-end pages | 0 |
| Max crawl depth | 2 |
| Avg crawl depth | 1.2 |

This site had 46 orphan pages: 42% of its content (46 of 110 pages) was invisible to search engines because nothing linked to it. That is the kind of structural issue a flat, link-only crawl does not detect on its own, because a crawler that only follows links never reaches a page that nothing links to.

What this means for you: You see exactly how Google navigates your site. Orphan pages, buried content, and authority bottlenecks are identified automatically — issues that most manual audits miss entirely.

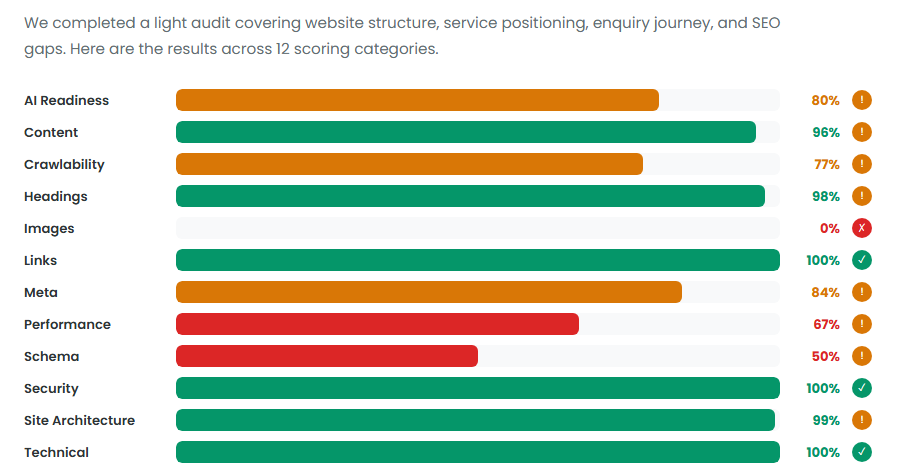

Stage 4: The 40-Check Rule Engine — Scoring Every Page

Every crawled page is checked against 40 rules across 12 categories. Each rule returns a pass or fail, the list of affected pages, a severity rating (critical, warning, or info), and a specific recommendation.
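As an illustration of that result shape, here is a sketch of one rule. The `RuleResult` fields mirror the four outputs described above; the rule itself (`check_missing_title`), its identifier, and its recommendation text are hypothetical examples rather than the audit's real rule definitions.

```python
from dataclasses import dataclass, field


@dataclass
class RuleResult:
    rule_id: str
    category: str
    passed: bool
    severity: str  # "critical", "warning" or "info"
    affected_pages: list = field(default_factory=list)
    recommendation: str = ""


def check_missing_title(pages: dict) -> RuleResult:
    """Hypothetical rule: every page needs a non-empty title tag.

    pages maps each URL to a dict of captured facts (assumed shape).
    """
    affected = sorted(
        url for url, facts in pages.items()
        if not (facts.get("title") or "").strip()
    )
    return RuleResult(
        rule_id="meta.title_present",
        category="Meta",
        passed=not affected,
        severity="critical",
        affected_pages=affected,
        recommendation="Add a unique, descriptive title tag to each affected page.",
    )
```

Running every rule over the crawl data yields a flat list of results that the later scoring and strategy stages can consume.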

Here is the actual category breakdown from a real audit:

The 12 Categories

| Category | What It Checks |
|---|---|
| Meta | Title tags, meta descriptions, canonical tags, Open Graph, meta robots |
| Headings | H1 presence and uniqueness, heading hierarchy |
| Images | Alt text coverage across every image on every page |
| Links | Broken internal links, redirect chains |
| Crawlability | robots.txt configuration, sitemap coverage, noindex conflicts, indexability |
| Schema | JSON-LD presence and field validation |
| Security | HTTPS coverage, HSTS headers, HTTP-to-HTTPS redirects |
| Performance | Core Web Vitals, redirect chain depth |
| Content | Thin content detection, duplicate titles, duplicate descriptions, keyword stuffing |
| AI Readiness | AI bot access, llms.txt, AI-friendly schema, content structure, entity clarity |
| Site Architecture | Orphan pages, crawl depth, dead ends, hubs, PageRank, equity flow, clusters, bottlenecks |
| Technical | HTTP status codes, redirect analysis |

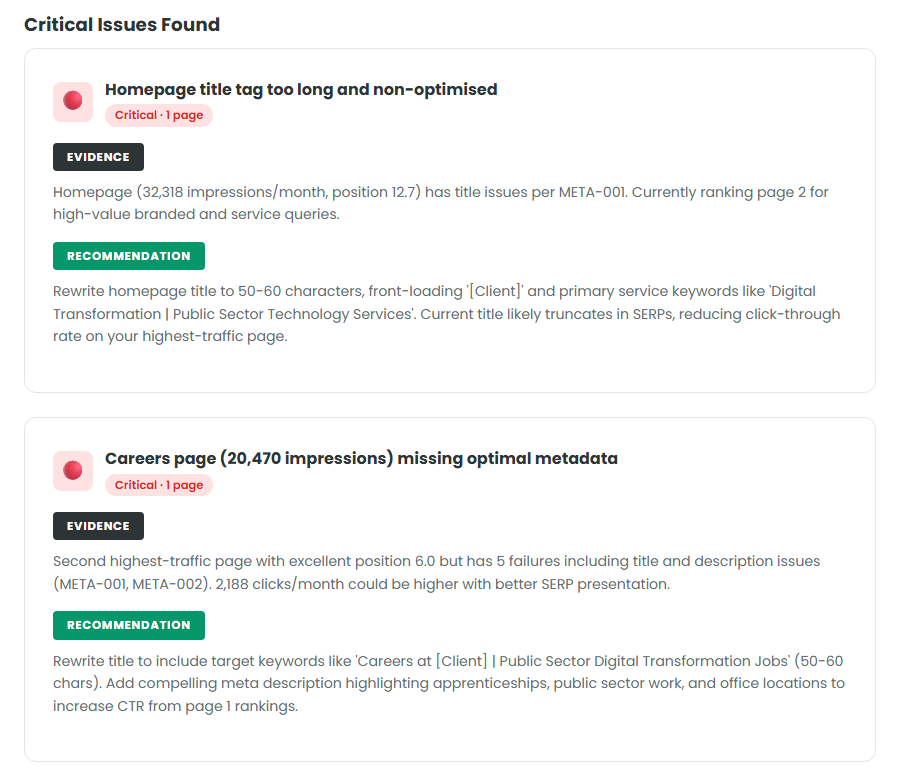

Here is what a critical finding looks like in the report:

Every issue comes with evidence (what the data shows), severity (how urgently to fix it), and a specific recommendation (what to do). You are not left interpreting raw data.

Stage 5: AI Readiness Layer — Can AI Search Engines Find You?

This is the layer that most audits do not include. It checks whether AI-powered search engines — ChatGPT, Perplexity, Google AI Overviews, Claude — can access and understand your content.

The AI readiness checks include:

- AI bot access — Are GPTBot, ClaudeBot, PerplexityBot, and 6 other AI crawlers allowed in your robots.txt?

- llms.txt — Does your site have an llms.txt file that describes your business to AI models?

- Schema for AI — Does your structured data help AI models understand what your business does?

- Content structure — Are your pages structured so AI models can extract and cite specific answers?

- Entity clarity — Can AI models clearly identify what your business is, what you offer, and who you serve?
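The first check in this list, AI bot access, can be approximated with Python's standard `urllib.robotparser`. This is a sketch under assumptions: only the three crawlers named above are included because the full list of nine is not given here, and `ai_bot_access` is an illustrative function name.

```python
from urllib.robotparser import RobotFileParser

# Only the three crawlers named in the text; the remaining six are not listed.
AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def ai_bot_access(robots_txt: str, url: str = "https://example.com/") -> dict:
    """Return, for each AI crawler, whether robots.txt lets it fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_BOTS}
```

For example, a robots.txt that disallows `GPTBot` but allows everything else would report `False` for GPTBot and `True` for the other two.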

Real AI Readiness Finding

From a recent audit:

AI Readiness Score: 68%

All 9 major AI bots are allowed in robots.txt — you are ahead of most competitors here. However, no llms.txt file exists, schema markup has 311 validation errors across 49 pages, and 58% of pages lack the heading structure AI models need to extract answers.

What this means for you: As search shifts toward AI-generated answers, your site needs to be structured so AI models can understand and cite your content. This layer tells you exactly where you stand and what to fix.

Stage 6: Scoring and Grading — One Number That Tells the Story

Every finding is scored by severity and weighted by business impact. The system produces an overall health score, per-category scores, and a severity breakdown.
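One simple way to turn rule results into scores is a severity-weighted pass rate. The sketch below is an assumption for illustration only: the weights in `SEVERITY_WEIGHT` are invented, and the real scoring also factors in business impact, which is not modelled here.

```python
# Assumed weights for illustration; the audit's real weighting is not published here.
SEVERITY_WEIGHT = {"critical": 5, "warning": 2, "info": 1}


def health_scores(results):
    """results: iterable of (category, severity, passed) tuples.

    Returns (overall_score, per_category_scores) as percentages.
    """
    earned, possible = {}, {}
    for category, severity, passed in results:
        weight = SEVERITY_WEIGHT[severity]
        possible[category] = possible.get(category, 0) + weight
        earned[category] = earned.get(category, 0) + (weight if passed else 0)

    per_category = {c: round(100 * earned[c] / possible[c]) for c in possible}
    total = sum(possible.values())
    overall = round(100 * sum(earned.values()) / total) if total else 0
    return overall, per_category
```

With weights like these, one failed critical rule lowers the score more than several failed info-level rules, which matches the idea of fixing critical issues first.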

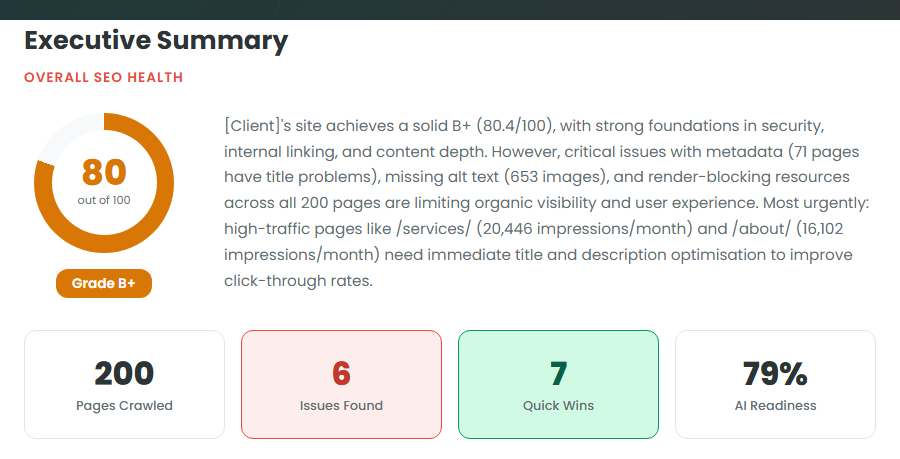

Here is the executive summary from a real audit:

The grade gives you an instant benchmark. The category breakdown tells you where to focus.

What this means for you: You do not need to interpret 40 individual checks. The scoring system does the prioritisation for you — critical issues first, then warnings, then improvements.

Stage 7: AI-Generated Strategy — Not Just Problems, Solutions

The audit data is fed into an AI model that generates a strategic interpretation of your results. This is not a template — every strategy is written specifically for your site based on your actual data.

The strategy includes:

- Executive summary — a plain-English assessment of your site’s SEO health

- What’s working — the things you should not change, with evidence

- Critical issues — the problems costing you traffic right now, ranked by impact

- Quick wins — low-effort, high-impact fixes you can action immediately

- 30/60/90 day roadmap — a prioritised action plan broken into sprints

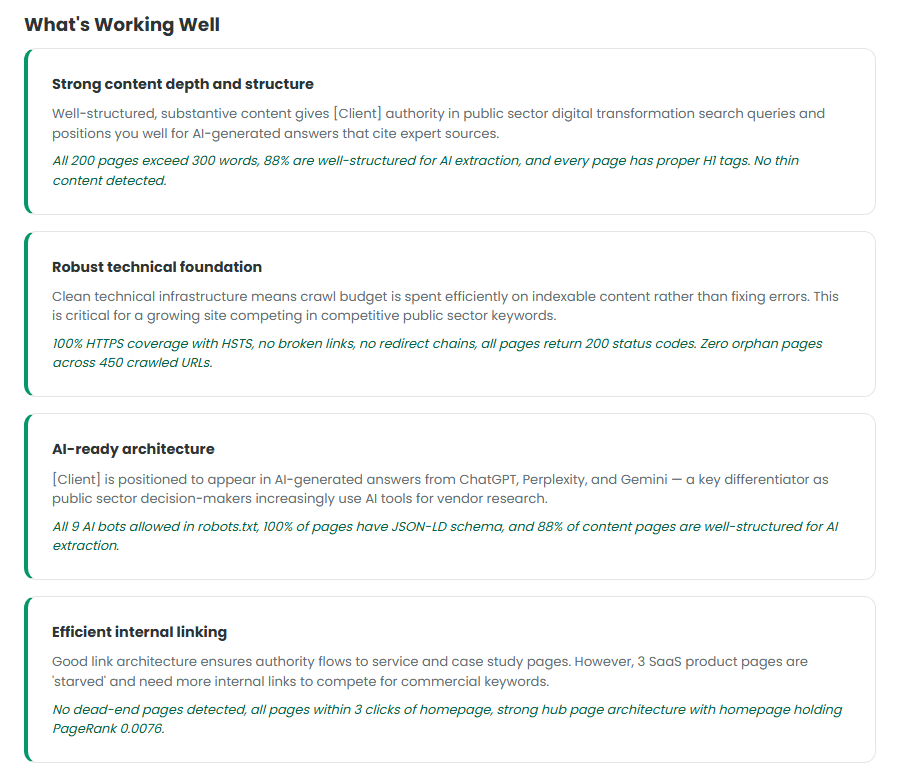

Here is what “What’s Working Well” looks like from a real audit:

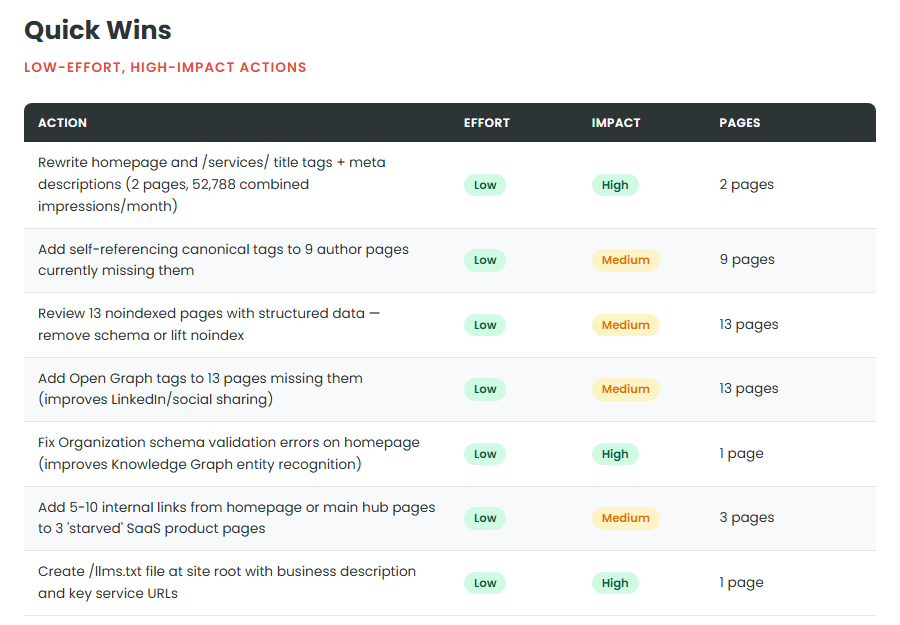

And here are the Quick Wins — low-effort, high-impact fixes:

What this means for you: You receive an actionable strategy that tells your team exactly what to do, in what order, and why. It is the difference between a data dump and a roadmap.

Stage 8: 90-Day Roadmap — Phased Action Plan

Every finding is prioritised into a phased roadmap: what to fix this week, this month, and this quarter.

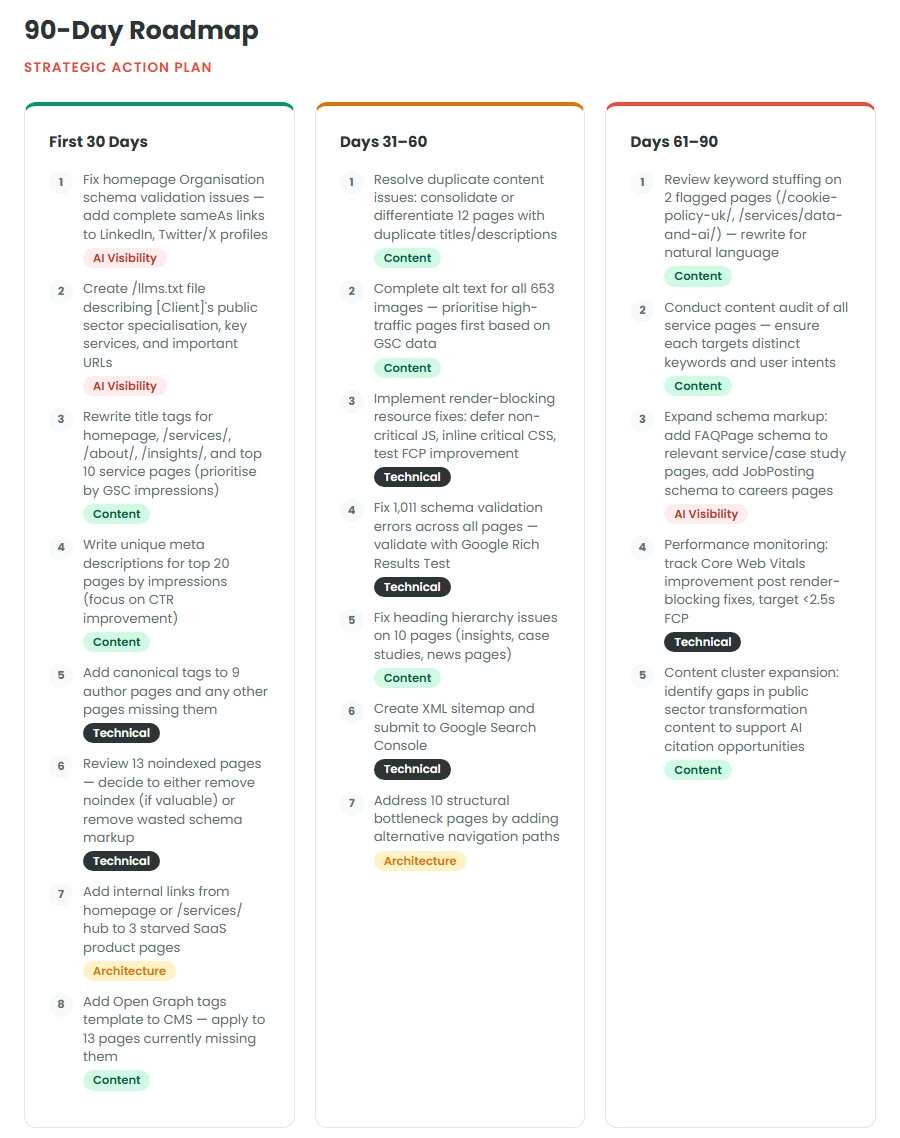

Here is a real 90-day roadmap from an audit:

One audit generated 289 individual tasks across 29 sprints — every single fix mapped out so your team can work through them methodically.

What this means for you: Your developers and content team receive specific, actionable tasks they can pick up immediately. No interpretation needed. No ambiguity about what to change.

Stage 9: Branded PDF Delivery

Everything is compiled into a branded PDF with your logo and colours. If you are an agency, your client sees a professional deliverable from your brand — they never see BrightIQ.

The final report includes:

- Cover page with your branding

- Executive summary and overall score

- Category-by-category breakdown with scores and findings

- Site architecture analysis with graph metrics

- AI readiness assessment with specific recommendations

- Prioritised strategy with 30/60/90 day roadmap

- Sprint-based action plan with every fix mapped out

I review the strategy for accuracy before delivering. A 15-minute walkthrough call is included with every audit.

The Numbers

| Traditional Manual Audit | Automated SEO Audit |
|---|---|
| 7-10 hours of consultant time | Delivered in under 24 hours |
| £1,500-3,000+ at agency rates | £297 flat rate |
| Covers 20-50 pages manually | Same depth on sites up to 1,000 pages |
| Depends on who runs it | Consistent output every time |
| No site architecture graph | Full PageRank and link equity analysis |
| No AI readiness checks | 5 AI readiness rules included |
| Static recommendations | AI-generated strategy unique to your site |
| Spreadsheet or slide deck | Sprint-based action plan with every fix mapped |

What Happens Next

- Request your audit — submit your URL and I will confirm within 24 hours

- Optional: Share Google Search Console access — this enriches the strategy with real traffic data (impressions, clicks, top queries)

- Receive your branded PDF — the full report with scores, findings, strategy, and action plan

- 15-minute walkthrough call — I walk you through the findings and answer questions

- Your team starts fixing — using the sprint-based action plan as a task list

Book your Automated SEO Audit and see exactly what your site is doing.


This is a supporting guide for my Automated SEO Audit service. See the full service details, pricing, and what's included.